[This post is part of a short series on Character Engine and what we’re doing at Spirit AI. I’m writing these posts with IF and interactive narrative folks in mind, but more general-audience versions of the same content are also appearing on Spirit’s Medium account. Follow us there if you’re interested in hearing regularly about what Spirit is up to.]

Character Engine is designed for two kinds of interaction: it can either generate input options for the player (as seen in Restless), or it can take natural language input, where the user is typing or using a speech-to-text system.

That natural language approach becomes useful for games in AR or VR where more conventional controls would really get in the way. It’s also good for games that are using a chatbot style of interaction, as though you were chatting to a friend on Facebook; and a lot of interactive audio projects deployed on platforms like Alexa use spoken input.

There are plenty of business applications for natural language understanding, and a lot of the big tech players — IBM, Google, Amazon, Microsoft, Facebook — offer some sort of API that will take a sentence or two of user input (like “book a plane to Geneva on March 1”) and return a breakdown of recognized intents (like “book air travel”) and entities (“March 1”, “Geneva”). If you prefer an open source approach over going to one of those companies, RASA is worth a look.
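To make that breakdown concrete, here is a minimal sketch of the kind of structure such a service might hand back for the example sentence. The class names, field names, and the `book_air_travel` label are my own assumptions for illustration; each vendor has its own response format, and this is not any of them.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Entity:
    kind: str  # hypothetical entity type, e.g. "destination" or "date"
    text: str  # the span of the user's input that was recognized


@dataclass
class NluResult:
    utterance: str
    intent: str
    entities: List[Entity] = field(default_factory=list)


# Hand-built result for the example sentence; a real service would compute this.
result = NluResult(
    utterance="book a plane to Geneva on March 1",
    intent="book_air_travel",
    entities=[Entity("destination", "Geneva"), Entity("date", "March 1")],
)

for entity in result.entities:
    print(f"{result.intent}: {entity.kind} = {entity.text}")
```

The point is only the split: one label for what the user wants, plus a list of the specifics that fill it in.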

For people who are used to parser interactive fiction, there’s some conceptual overlap with how you might set up parsing for an action: we’re still essentially identifying what the command might be and which in-scope nouns plug into that system. In contrast with a parser, though, these systems typically use machine learning and pre-trained language models to allow them to guess what the user means, rather than requiring the user to say exactly the right string of words. There are trade-offs to this. Parsers are frustrating for a lot of users. Intent recognition systems sometimes produce unexpected answers if they think they’ve understood the player, but really haven’t. In both cases, there’s an art to refining the system to make it as friendly as it can be, detect and guide the user when they say something that doesn’t make sense, and so on.

Some natural language services also let the developer design entire dialogue flows to collect information from the user and feed it into responses — usually interchanges of a few tens of sentences, designed to serve a repetitive, predictable transaction, and lighten the load on human customer service agents.

Those approaches can struggle a bit more when it comes to building a character who is part of an ongoing story, who has a developing relationship with the player or user, and who uses vocabulary differently each time.

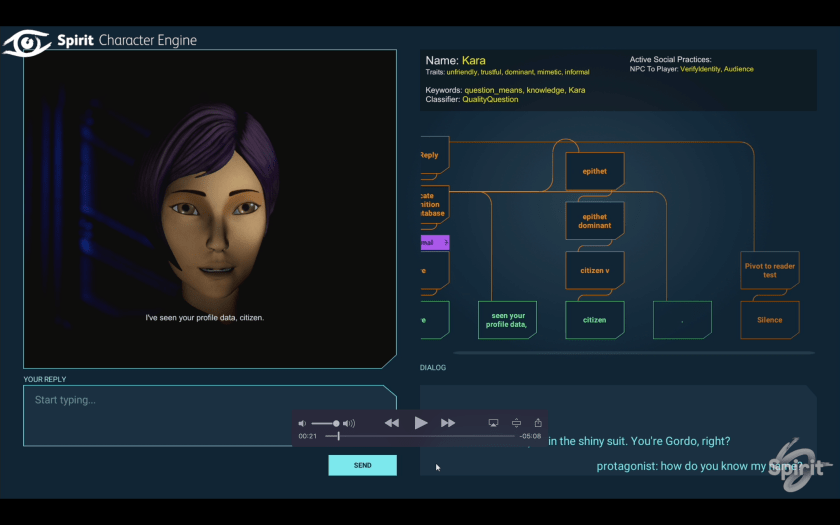

That’s exactly where Character Engine can naturally provide a bit more support. We’re tracking character knowledge and personality traits. We’re doing text generation to build output that varies both randomly and in response to lots of details of the simulated world. And because dialogue in Character Engine is expressed in terms of scenes of interaction, it’s easier to maintain large and complex flows where the same input should be understood differently at different times.
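As a rough illustration of the scene idea, the sketch below routes the same recognized intent to different responses depending on which scene is active. This is not Character Engine's actual data model: the scene names, the `affirm` intent, and the `respond` function are all hypothetical, and a real system would do far more than look up a string.

```python
# Hypothetical scenes, each with its own mapping from intent to response.
SCENES = {
    "interrogation": {"affirm": "So you admit you were at the docks."},
    "tea_party": {"affirm": "Lovely, I'll pour you a cup."},
}

FALLBACK = "I'm not sure what you mean."


def respond(scene: str, intent: str) -> str:
    """Look up the response for an intent within the currently active scene."""
    moves = SCENES.get(scene, {})
    return moves.get(intent, FALLBACK)


# The same "yes" from the player lands differently in each scene.
print(respond("interrogation", "affirm"))
print(respond("tea_party", "affirm"))
```

Scoping the lookup to a scene keeps each table small, which is the maintenance benefit described above.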

We don’t want to be in a race with the providers of existing NLU solutions, though. What we want to do is facilitate author-friendly, narrative-rich writing options that tie into whatever happens to be the current state of the art in language understanding. So here’s a bit about how we do that:

Mix and match third-party intent systems with custom Spirit classifiers. Character Engine can plug into one or more external APIs, if you want characters who respond to pre-trained intents from some other system. You can also use classifiers we’ve trained at Spirit, which look for types of question (if you want to be able to interrogate a character about lore) or for various kinds of social interaction.

Or, if you’re savvy about your NLU methods and you’d rather build your own classifiers, you can do that as well, as long as what you build is returning data according to our format standards.

That means we’re agnostic about how your model is implemented. Got a state-of-the-art TensorFlow classifier to plug in? Cool. Built something in Python that uses some regular expressions to determine whether the dialogue matches what you’re looking for? No problem. Want to deploy classifiers for Arabic or Mandarin even though Spirit hasn’t directly tackled those languages yet? Also doable.
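For the regular-expression case, a home-made classifier can be very small indeed. The sketch below uses Python's standard `re` module; the returned dictionary is a made-up shape for illustration and should not be read as Spirit's actual format standard, which isn't spelled out here.

```python
import re

# Matches a handful of common English greetings as whole words.
GREETING = re.compile(r"\b(hello|hi|hey|good (morning|evening))\b", re.IGNORECASE)


def classify_greeting(utterance: str) -> dict:
    """Report whether the utterance contains a greeting (hypothetical output shape)."""
    match = GREETING.search(utterance)
    return {
        "classifier": "greeting",
        "matched": match is not None,
        "evidence": match.group(0) if match else None,
    }


print(classify_greeting("Hey there, stranger"))
print(classify_greeting("Where is the key?"))
```

Anything that can take a string and return a structured verdict like this could sit in the same slot, whether it's a regex or a neural model.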

If you wanted, you could build a set of classifiers that recognized standard text adventure commands like in the Inform library and hook those up to Character Engine, and use them either alone or in conjunction with more chat-focused understanding.
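Here is a toy version of what such a command classifier might look like. It is nowhere near the coverage of the Inform library's grammar; the verb table, the output dictionary, and the `classify_command` name are all assumptions made up for this sketch.

```python
# Hypothetical synonym table: each surface phrase maps to a canonical action.
VERBS = {
    "take": "take", "get": "take", "grab": "take",
    "examine": "examine", "x": "examine", "look at": "examine",
    "drop": "drop",
}

ARTICLES = ("the ", "a ", "an ")


def classify_command(utterance: str):
    """Return {"action", "noun"} for a recognized verb phrase, else None."""
    text = utterance.lower().strip().rstrip(".!")
    # Try longer verb phrases first so multi-word verbs are not cut short.
    for phrase in sorted(VERBS, key=len, reverse=True):
        if text == phrase or text.startswith(phrase + " "):
            noun = text[len(phrase):].strip()
            # Drop one leading article, if present.
            for article in ARTICLES:
                if noun.startswith(article):
                    noun = noun[len(article):]
                    break
            return {"action": VERBS[phrase], "noun": noun or None}
    return None


print(classify_command("Look at the brass lamp."))
print(classify_command("sing"))
```

Unlike a real parser, this does no scope checking: it has no idea whether a brass lamp is actually in the room.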

Use selected custom classifiers offline. Some of Spirit’s classifiers can be used offline rather than via an online service, so it’s possible to build some natural language experiences that don’t require the user to be constantly connected to the internet.

Work with inputs other than language. There are other triggers and inputs that some developers might want to work with besides verbal input. In a room-scale VR scenario, they might want to respond to the player approaching a character. In interactive audio, they might want to trigger a new response if the player has been silent for a long time. Elements like this can be used to trigger dialogue responses from characters, either alone or in combination with verbal input.
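One way to picture this is to treat silence and proximity as events in the same stream as speech. The sketch below is an assumption-heavy illustration: the event kinds, the 20-second and 1-metre thresholds, and the trigger names are all invented here, not taken from Character Engine.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class InputEvent:
    kind: str                           # "utterance", "silence", or "proximity"
    text: Optional[str] = None          # what the player said, if anything
    seconds_silent: float = 0.0         # how long the player has been quiet
    distance_m: Optional[float] = None  # player-to-character distance in metres


def pick_trigger(event: InputEvent, silence_limit: float = 20.0, near_m: float = 1.0):
    """Map an input event to a hypothetical dialogue trigger name, or None."""
    if event.kind == "silence" and event.seconds_silent >= silence_limit:
        return "prompt_player"
    if (event.kind == "proximity" and event.distance_m is not None
            and event.distance_m <= near_m):
        return "acknowledge_approach"
    if event.kind == "utterance" and event.text:
        return "handle_speech"
    return None


print(pick_trigger(InputEvent("silence", seconds_silent=30.0)))
print(pick_trigger(InputEvent("proximity", distance_m=0.6)))
```

Because everything arrives as an event, a response can depend on a non-verbal trigger alone or be combined with whatever the player last said.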

Detect things other than intent. Maybe instead of recognizing what the player is saying, you’re more interested in how it’s being said. Is the sentiment good or bad? Is the vocabulary formal or informal, polite or toxic? Classifiers for those aspects of language can run alongside the rest, and give you an opportunity to respond to performances of anger, dominance, or friendliness.
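To show what running classifiers side by side might mean in practice, here is a deliberately crude sketch that checks tone and question-ness on the same utterance and merges the results. The word lists are a toy lexicon I made up; real sentiment or toxicity classifiers are trained models, not keyword sets.

```python
# Toy lexicons, purely illustrative.
POLITE = {"please", "thanks", "thank", "sorry"}
HOSTILE = {"idiot", "stupid", "shut"}


def tone(utterance: str) -> str:
    """Label the utterance hostile, polite, or neutral by keyword lookup."""
    words = {w.strip(".,!?").lower() for w in utterance.split()}
    if words & HOSTILE:
        return "hostile"
    if words & POLITE:
        return "polite"
    return "neutral"


def is_question(utterance: str) -> bool:
    """Very rough check: does the utterance end with a question mark?"""
    return utterance.strip().endswith("?")


def analyze(utterance: str) -> dict:
    """Run every classifier on the same input and collect the labels."""
    return {"tone": tone(utterance), "question": is_question(utterance)}


print(analyze("Could you open the door, please?"))
print(analyze("Shut up, idiot!"))
```

Keeping the "how" labels separate from the "what" labels means a character can answer the question and still react to the rudeness.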

Author without having to memorize everything about your NLU system first. To create a conversation move in the Character Engine tool, you type a line of sample dialogue that you want to match, and the tool adds metadata to show you what classifiers and entities would correspond to that dialogue. You can then hand-tweak the results, add additional restrictions, and more.