[Most months, I devote the second Tuesday of the month to a mailbag post that answers a question someone’s sent to me. I’m still doing one of those in March, but it will come out in two weeks’ time.

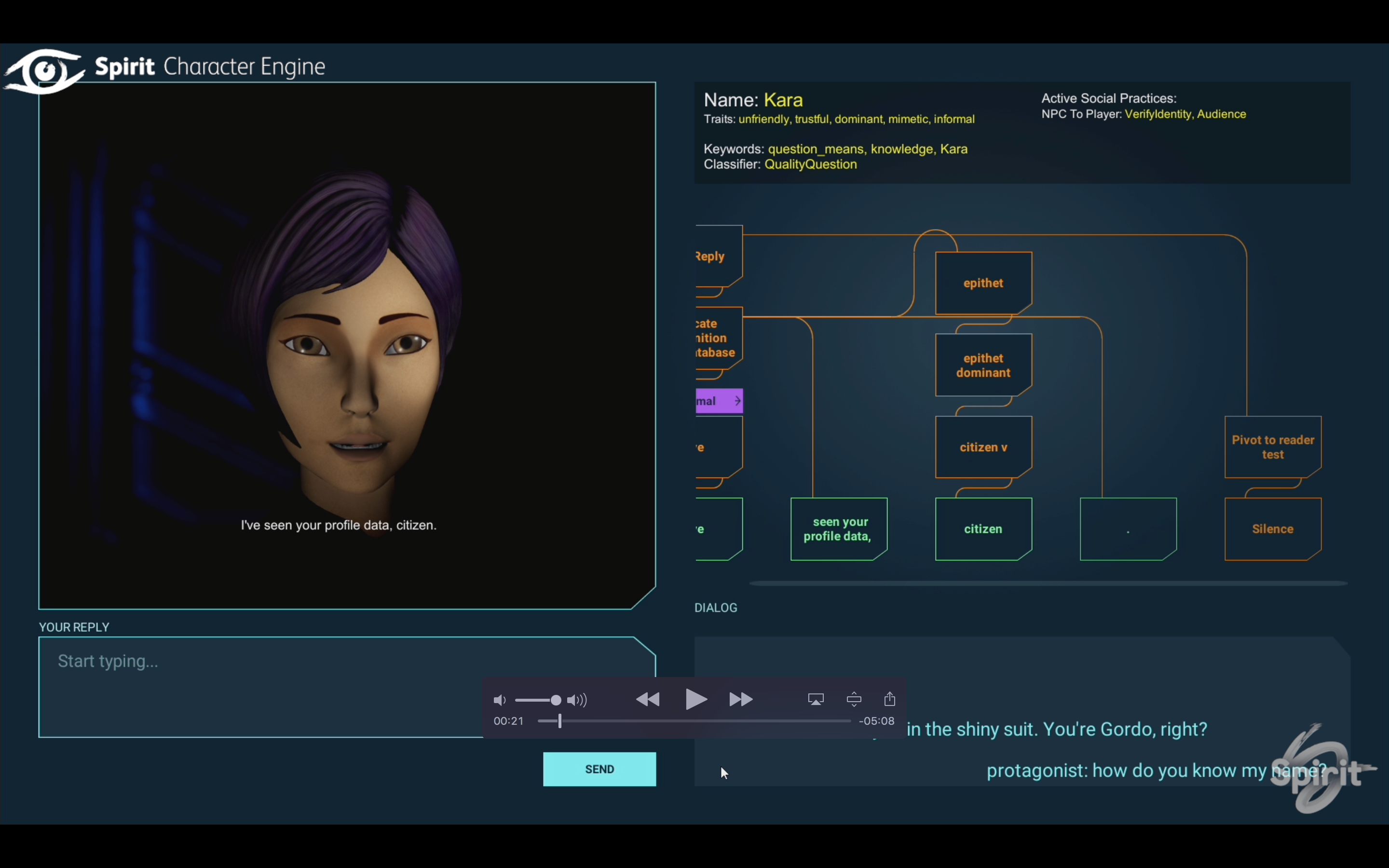

Instead, this post is part of a short series on Character Engine and what we’re doing at Spirit AI. I’m writing these posts with IF and interactive narrative folks in mind, but more general-audience versions of the same content are also appearing on Spirit’s Medium account. Follow us there if you’re interested in hearing regularly about what Spirit is up to.]

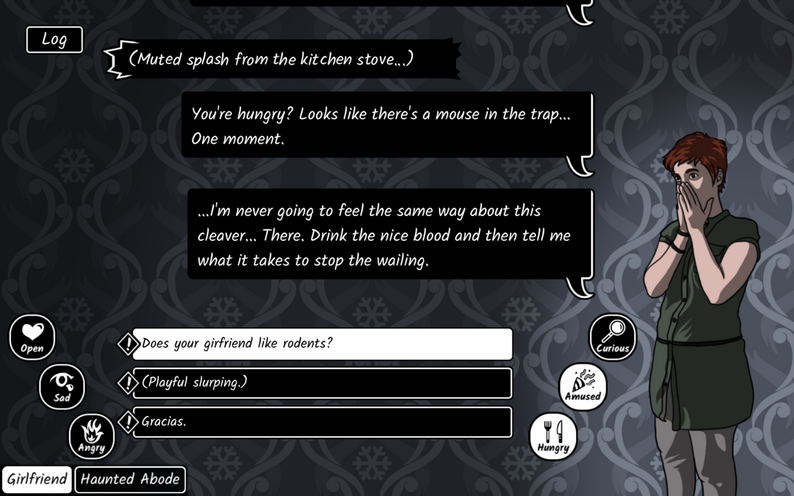

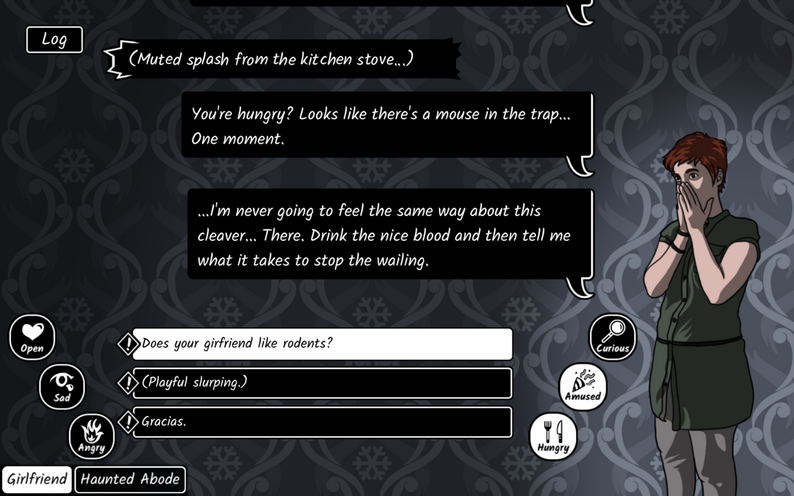

In January, I published a talk about conversation as gameplay, and in particular my ECTOCOMP game jam piece Restless. There, I talked about using Character Engine to give the player more expressive ability. They can tune their dialogue to be open, scary, vulnerable, aggressive, playful; they can pick input topics that they want to focus on.

I’m now revisiting Restless to address some of the flaws I identified in that piece, to give it a better tutorial introduction, polish its use of dynamic text, and add some depth to the content. Tea-Powered has created some additional art, so we can better signal gradations of character emotion. And I’m also refining some of the mechanics under the hood, particularly those that deal with gradual changes in character emotion.

What I’m going for in terms of the design:

- perceivable consequence:
  - all of the player’s actions should have some effect on the outcome of the story, even if just by moving stats in one direction or another
  - the NPC’s emotional state should be apparent to the player, communicated through art, words, and actions
  - some areas of the NPC’s emotional state space should trigger narrative progression, by having the character get angry, leave, reveal new information, go back to sleep, etc
- intentionality:
  - the NPC’s reactions should be consistent and predictable enough that the player can choose what to do and be rewarded by an expected outcome, even if there is an additional unexpected consequence
  - the player should have some warning when the NPC is approaching a state that will trigger a significant reaction
- hysteresis:
  - the NPC should not rapidly cycle back and forth between two emotional states in response to opposed actions from the player; the history of interactions should matter
- varied narrative intensity and pacing:
  - not all player actions should be of equivalent efficacy; some actions should be more important, memorable, or high-stakes
  - it should not be possible to achieve an important result by grinding repetition of an unimportant action

That last point possibly deserves a little bit of expansion. One of the issues with stat-based relationship tracking in games is that you can do a lot of banal minor services for a character and work your way to a point where you’ve maxed out your affinity and now they want to marry you.

This is bad for multiple reasons. One, it’s unrealistic; humans don’t work like that. Two, it’s boring gameplay and boring story. Doing minor favors over and over feels like a chore, from a gameplay perspective. And narratively, there’s no sense of rising stakes, or risk, or the thrill of the relationship becoming more intense.

One way to resolve this would be to throw away the consequences of player action after a certain point. If you’re already at 50% affinity, paying one more compliment to the character does nothing at all. Now you can’t grind your way to romance through compliments: problem solved! Except that this goes against the earlier design principle that all player actions should have consequences of some kind.

So, instead, what we have to do is turn off the player’s ability to do minor actions when we’re in major-consequence territory. Compliment away… while your relationship is still in the trivial flirt zone. But work your way up to where things are getting serious, and the available affinity moves also get more serious, more freighted. And just having those “bigger” moves popping up in the choice menu should communicate to the player, along with the character art, that we’re in a more important situation.

Fortunately, all of that is pretty doable, by restricting dialogue lines to particular ranges of the emotional space.
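
As a rough illustration only, here is one way range-restricted lines and a hysteresis band might be sketched in Python. This is not Character Engine's API: the `DialogueLine` fields, the 0 to 100 affinity scale, the sample lines, and the `enter`/`leave` thresholds are all invented for the example, and a single number stands in for what would really be a richer emotional space.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DialogueLine:
    text: str
    low: int    # offered only while low <= affinity <= high
    high: int
    delta: int  # how far choosing the line moves affinity


# Hypothetical content on a 0-100 affinity scale; not taken from Restless.
LINES = [
    DialogueLine("Nice hat.", 0, 49, 5),
    DialogueLine("I like talking to you.", 30, 69, 10),
    DialogueLine("I've never told anyone this before.", 60, 100, 25),
]


def available_lines(affinity, lines=LINES):
    """Return only the lines whose range contains the current affinity."""
    return [line for line in lines if line.low <= affinity <= line.high]


def choose(affinity, line):
    """Apply a chosen line, clamping the result to the 0-100 scale."""
    return max(0, min(100, affinity + line.delta))


def next_mood(mood, affinity, enter=60, leave=40):
    """Switch moods with a hysteresis band so small swings don't flip the state."""
    if mood == "casual" and affinity >= enter:
        return "serious"
    if mood == "serious" and affinity <= leave:
        return "casual"
    return mood


def grind(affinity, text, lines=LINES):
    """Repeat one line until it is no longer offered or stops having any effect."""
    while True:
        offered = {line.text: line for line in available_lines(affinity, lines)}
        if text not in offered:
            return affinity
        updated = choose(affinity, offered[text])
        if updated == affinity:  # clamped at the edge of the scale
            return affinity
        affinity = updated
```

With these made-up numbers, `grind(0, "Nice hat.")` stalls at 50, because the compliment drops out of the menu once affinity leaves its range; every compliment along the way still moved the stat, but further progress needs one of the weightier lines. Likewise, an affinity of 55 reads as "casual" or "serious" depending on which side it was approached from, so alternating opposed actions near the boundary does not flip the mood back and forth.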

This work is still in progress — I’ll post when the new version of Restless is generally available.