This is a continuation of an earlier mailbag answer about AI research that touches on dialogue and story generation. As before, I’m picking a few points of interest, summarizing highlights, and then linking through to the detailed research.

This one is about a couple of areas of natural language processing and generation, as well as sentiment understanding, relevant to how we might realize stories and dialogue with particular surface features and characteristics.

Transferring text style

Style transfer is familiar in image manipulation, and there are loads of consumer-facing applications and websites that let you make style changes to your own photographs. Textual style transfer is a more challenging problem. How might you express the same information, but in different wording, representing a different authorial manner? Alter the sentiment of the text to make it more positive or negative? Translate complex language to something more basic, or vice versa? Capture the distinctive prose characteristics of a well-known author or a specific era? Indeed, looked at the right way, translation from one human language into another can be regarded as a form of style transfer.

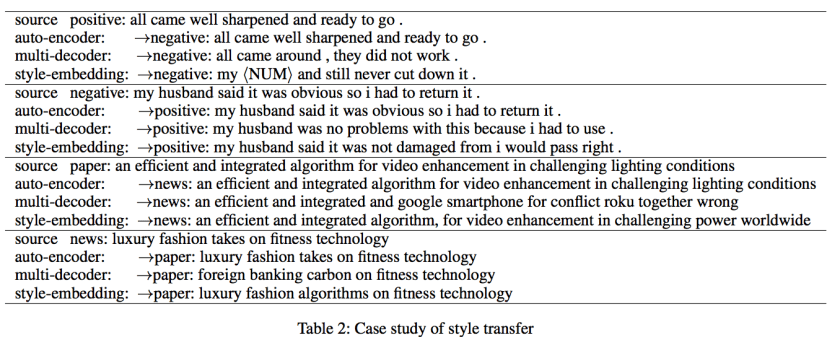

Style Transfer in Text: Exploration and Evaluation is an overview of why text style transfer is harder than image style transfer — lack of data with parallel content being a significant issue. The paper also proposes metrics of transfer strength and content preservation to determine the quality of text style transfers. Initial results, though, don’t yet look good enough to use in real-world applications where we might demand high accuracy from the output:

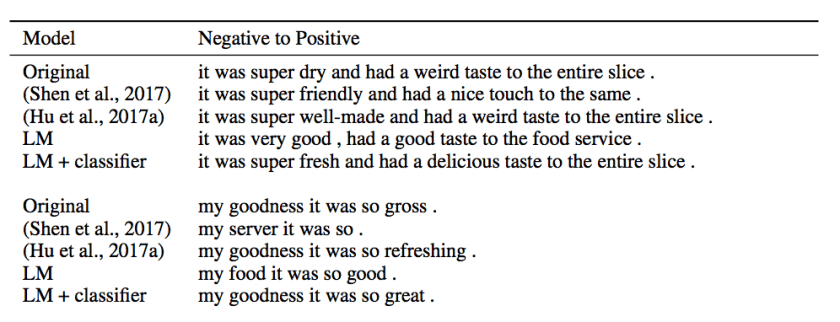
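To make the two kinds of metric a little more concrete, here is a toy sketch of the general idea, not the paper's implementation. It assumes that content preservation can be approximated by cosine similarity between bag-of-words vectors, and that transfer strength is the fraction of outputs a style classifier assigns to the target style. The function names, the word list, and the keyword-based `looks_positive` classifier are all hypothetical stand-ins.

```python
import math
from collections import Counter


def bag_of_words(sentence):
    """Lowercase, whitespace-tokenized word counts."""
    return Counter(sentence.lower().split())


def content_preservation(source, output):
    """Cosine similarity between bag-of-words vectors (0.0 to 1.0).

    A crude proxy: real systems would compare learned embeddings.
    """
    a, b = bag_of_words(source), bag_of_words(output)
    dot = sum(a[w] * b[w] for w in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def transfer_strength(outputs, is_target_style):
    """Fraction of outputs that the classifier labels as the target style."""
    if not outputs:
        return 0.0
    return sum(1 for s in outputs if is_target_style(s)) / len(outputs)


# Hypothetical stand-in for a trained style classifier.
POSITIVE_WORDS = {"great", "good", "delicious"}


def looks_positive(sentence):
    return any(w in POSITIVE_WORDS for w in sentence.lower().split())


score = content_preservation("the food was terrible", "the food was great")
strength = transfer_strength(
    ["the food was great", "the food was terrible"], looks_positive
)
```

The two numbers pull against each other: copying the input unchanged preserves content perfectly but transfers no style, while replacing it with an unrelated sentence in the target style does the opposite.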

Style Transfer from Non-Parallel Text by Cross-Alignment (Shen et al.) proposes methods to deal with the lack of parallel content and establishes several challenges around sentiment transfer and decipherment that might serve as a proving ground for style transfer.

Unsupervised Text Style Transfer Using Language Models as Discriminators builds on the foregoing. It proposes using a language model as the discriminator, as an alternative to generative adversarial networks that use binary classifiers. Their LM+classifier outputs make better word substitution choices than the previous examples.

Fighting Offensive Language on Social Media with Unsupervised Text Style Transfer focuses specifically on taking offensive examples from Twitter and Reddit and translating the sentences into others that are more socially acceptable. This approach sets it apart from a lot of previous work on ML-based text classification that simply seeks to identify offensive language in social media postings.

The results here, like the results on story generation above, are fascinating while at the same time demonstrating how far the state of the art has to go in this space. Result sentences sometimes lose the meaning of the originals — “bros before money” does not mean the same thing as “bros before hoes” — and in some places they are less effective than a simple word redaction and replacement approach.

(As a sidebar: this approach assumes that all offensive utterances could be turned into inoffensive ones with just a change of wording and no loss of meaning — but “bros before hoes” implies that men are capable of friendship and solidarity while women are always nothing more than transactionally-motivated sex objects. There is no possible way of phrasing that idea that would render it harmless.)
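For comparison, the kind of simple word redaction and replacement approach mentioned above can be sketched in a few lines. This is a minimal illustration, not the baseline used in the paper: the `REPLACEMENTS` mapping, the `redact_and_replace` function, and the mild placeholder words are all hypothetical.

```python
import re

# Hypothetical word list with mild placeholders. A value of None means
# "no acceptable substitute, so redact the word entirely".
REPLACEMENTS = {
    "idiot": "person",
    "stupid": None,
}

# Match listed words only as whole words, ignoring case.
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(word) for word in REPLACEMENTS) + r")\b",
    re.IGNORECASE,
)


def redact_and_replace(text, redaction="[redacted]"):
    """Swap each listed word for its substitute, or redact it."""
    def substitute(match):
        replacement = REPLACEMENTS[match.group(0).lower()]
        return replacement if replacement is not None else redaction

    return _PATTERN.sub(substitute, text)


cleaned = redact_and_replace("You are a stupid Idiot")
```

A filter like this changes only the listed words and leaves the rest of the sentence alone, which is also why it cannot address an utterance whose offensiveness lies in the idea rather than the vocabulary.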

Recognizing sentiment in chat or spoken text

A companion to the problem of being able to generate prose in a particular mood or style is the problem of being able to recognize those elements in text that we take in.

Sentiment analysis at its most basic is about trying to tell whether a piece of writing is overall positive or negative about the thing that it’s describing, and one of its main commercial applications has been to analyze huge numbers of reviews online in order to detect whether a given company’s products are liked or disliked by consumers. There’s a lot more we might ask from sentiment analysis, though: the ability to pick out more nuances than just “good” vs “bad”; the ability to detect ironic or sarcastic utterances, which is sometimes hard even for humans; the ability to act on shorter pieces of text.

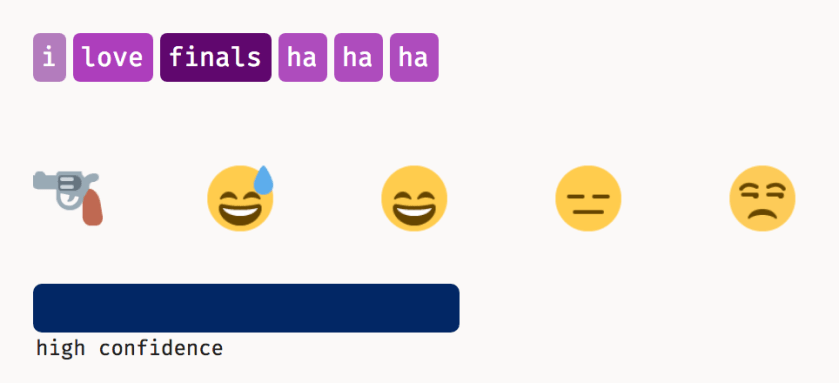

Deepmoji is a project that uses large quantities of Twitter data to correlate emoji with tweet content. The results are often significantly better than those of other sentiment analysis models (if we read the emoji as representing different types of sentiment). Sometimes the system has obviously recognized some topical content as well.

Impressively, this model is sometimes able to distinguish between sentences that are formally almost the same but where a human reader would recognize one as ironic. Here I am loving donuts, probably sincerely:

But if I claim to love finals? Deepmoji gives that one the side-eye.

Applying personality and emotion to dialogue

I’ve also written in the past about some less machine learning-based tools and methods for applying personality and emotion to dialogue, as well as research into how dialogue communicates the state of the speaker. References through the link.

Here is an older (2014) post about procedural text tools in the IF world specifically, and here is one on rendering world models in text. Both of these are directed more at interactive fiction authors than at AI researchers.
