Events

August 16 is the next meeting of the Seattle Area IF Meetup.

August 23-24 is Reality Escape Con, an online, free convention about room escape games, organized by the same people who are behind the Room Escape Artist blog.

September 5 is the next meeting of the SF Bay Area IF Meetup.

October 3-4 is the announced weekend for Roguelike Celebration 2020. The event is moving to a virtual model this year. More specifics about the event can be found here.

Contests

IFComp 2020 is still accepting entries for another two weeks. Authors should register their intent to enter by September 1. The entries themselves are due September 28.

IFComp 2020 is still accepting entries for another two weeks. Authors should register their intent to enter by September 1. The entries themselves are due September 28.

IntroComp, meanwhile, has already passed the deadline for submissions, but you can play the games and vote on them until August 31.

IntroComp, meanwhile, has already passed the deadline for submissions, but you can play the games and vote on them until August 31.

And inkjam 2020 has just recently concluded, with a number of games written in ink. The winners are still online to play.

Releases

Wide Island is a Twine piece by Draconic Chipmunk. It relies heavily on the technique of text expansion: a few linked words will expand into a much longer passage, which itself contains further expansions. Important information about the protagonist, setting, and situation are buried in different parts of the narrative, and the structure means that you might encounter them in any order. The effect reminded me of the telescopic narration in Lime Ergot, where new details constantly encourage the reader to recontextualise what has come before.

I had been reading for several minutes before discovering that the main character was a man with a wife and child. For lack of other information, I’d initially pictured someone demographically more like myself — but that shift felt like an intended part of the reading experience.

There were a few things that surprised me; for instance, several of the links seemed to offer more information on one topic but in fact opened out to talk about something different. But that relative lack of readerly agency also felt appropriate here. Overall, an interesting experiment in hypertext construction. (If this technique interests you, see also stretchtext.)

Thanks to Petter Sjölund, Dan Fabulich, 2lindell, Ed King, jackk225, Kevin Lo, nosferatu-if, OtherOlly, Sabe Jones, Sukil Etxenike, and thehatless, as well as long-term contributions by Dannii Willis and Andrew Plotkin, Counterfeit Monkey is now available in Release 9(!). There is a change log, and the game can be played in-browser complete with the map and all the assorted goodies.

Thanks to Petter Sjölund, Dan Fabulich, 2lindell, Ed King, jackk225, Kevin Lo, nosferatu-if, OtherOlly, Sabe Jones, Sukil Etxenike, and thehatless, as well as long-term contributions by Dannii Willis and Andrew Plotkin, Counterfeit Monkey is now available in Release 9(!). There is a change log, and the game can be played in-browser complete with the map and all the assorted goodies.

AI and Text Generation

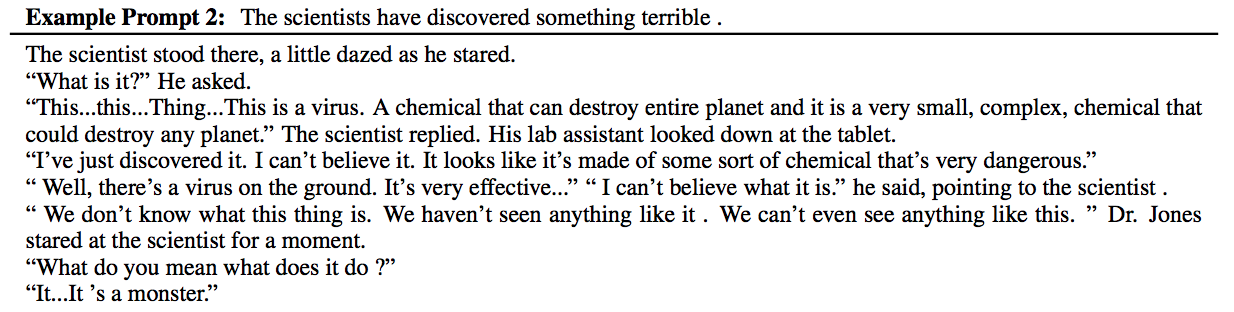

GPT-3 has been available to a limited group of people for a couple of months now, and interesting applications are starting to appear, including a blog that fooled a number of human readers.

Nick Walton, the creator of the AI Dungeon project that used GPT-2, has now set up a GPT-3 version that requires a subscription to access. (Once you have access, you’ll need to go to the settings panel and switch over to the “Dragon” model to activate it, as well.)

On my trials so far, it’s given a reasonably coherent but often sort of conservative performance:

The Dragon model also adds a feature that explicitly logs quests and tracks whether it thinks you’ve completed them or not — more of an attempt to track world state than we saw in the earlier versions of AI Dungeon.

You can also try priming the system with a prompt of your own; it took Counterfeit Monkey in a surprising direction with some torch-carrying sewer-dwellers.