Last month I wrote a bit about text generation and generated narratives overall. This month, I’ve been looking more at parser games — games that typically are distinguished by (among other things) having an expressive (if not very discoverable) mode of input along with a complex world model.

My own first parser IF projects were very interested in that complexity. I liked the sensation of control that came from manipulating a detailed imaginary world, and the richness of describing it. And part of the promise of a complex world model (though not always realized in practice) was the idea that it might let players come up with their own solutions to problems, solutions that weren’t explicitly anticipated by the author.

It might seem like these are two extremes of the IF world: parser games are sometimes seen as niche and old-school, so much so that when I ran June’s London IF Meetup focused on Inform, we had some participants asking if I would start the session by introducing what parser IF is.

Meanwhile, generative text is sometimes not interactive at all. It is used for explorations that may seem high-concept, or else like they’re mostly of technical interest, in that they push on the boundaries of current text-related technology. (See also Andrew Plotkin’s project using machine learning to generate imaginary IF titles. Yes, as an intfiction poster suggested, that’s something you could also do with an older Markov implementation, but that particular exercise was an exercise in applying tech to this goal.)

There’s a tighter alignment between these types of project than might initially appear. Bruno Dias writes about using generative prose over on Sub-Q magazine. And Liza Daly has written about what a world model can do to make generated prose better, more coherent or more compelling.

I’ve been wrestling with how to render my modeled worlds in text since the beginning. When I started writing IF, in the late 90s, I was very interested in simulation challenges: ropes, fire, liquids, Polaroid photographs. These were annoying problems partly because they required objects that were rendered in multiple parts, but connected (the two ends of the rope might be tied to different things!); or objects that might be divided an arbitrary number of times (the rope could be cut, the liquid could be partially poured out of the teapot into a cup); or objects that might change their state in complicated ways (the liquid could be mixed with other liquids to make new things, the fire could destroy an object leaving only its metal components behind).

Polaroids were a problem because you had to retain the description of the object(s) photographed, and come up with a parseable name for the photograph that would distinguish it fully. So e.g. “the photo of the burning log” might need to be distinct form “the photo of the burnt log”. This is all doable, much more doable than it used to be, because back in the day if you wanted that kind of variable parsing associated with an object in Inform 6, you had to write a special name-parsing routine for that object, and it could get pretty fiddly.

Also, no one takes Polaroids any more. So, as authors, we’re off the hook.

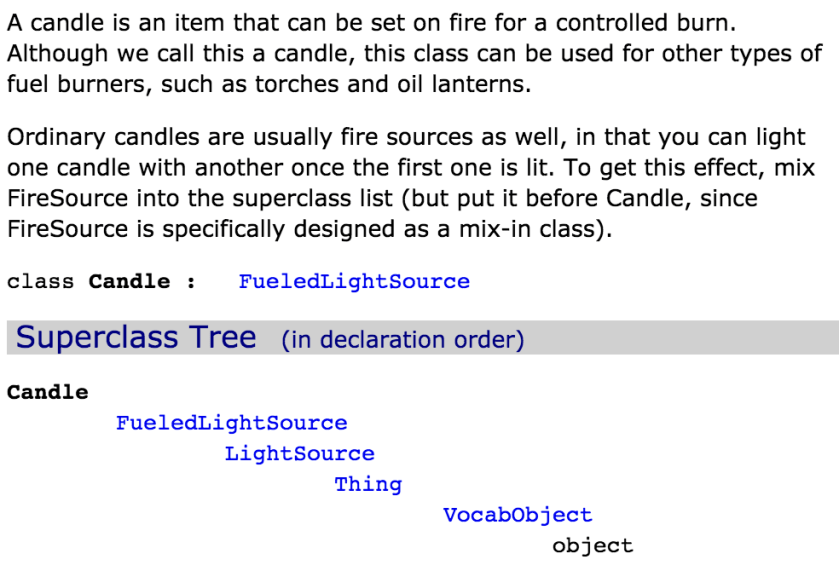

TADS 3 has the deepest standard library of any IF system out there, in this regard. This snippet defining the Candle class may give a bit of the sense of what TADS 3 is modeling out of the box:

And this is not a unique example. TADS 3 provides for concepts such as “HighNestedRoom”, an elevated space inside a larger area, or “NumberedDial” for the sort of dial you might have on the front of an oven.

A big part of the challenge was around describing those things fully enough to the player, in natural ways, and then in parsing back the response. Inform and TADS already do quite a lot of prose generation, albeit of a fairly mechanical kind, to produce descriptions like

On the table, you can see a pot (in which is a cooking chicken) and a lamp (providing light).

And TADS 3 in fact provides some fairly sophisticated ways to string together descriptions if the player takes multiple steps at a time. NORTH might produce a response like

You unlock and open the iron-bound door, then step through.

…where the system has first detected that the door needs to be opened, that it needs to be unlocked, that you do have the key required to do that unlocking, that it can successfully fire all the relevant implicit actions, and that it can then collate the action descriptions back together into a single, reasonably compact sentence. (Indeed, the introduction to the TADS 3 object library offers an argument for why simulation and storytelling aren’t fundamentally at odds with one another.)

Building on the default libraries — either to make descriptions more fluid and writerly, or to account for world state beyond the default model — tends to involve a bit more text generation work. Here is an article I wrote in 2014 about some of the common methods and applications of generating text in (mostly) parser games.

These sorts of problems might seem to be very different from the challenges solved by the procedural text systems underlying Parrigues or Voyageur. The parser versions typically start with the world model first, and derive a series of facts about that model that are then systematically reported to the player. My Inform extensions Room Description Control and Tailored Room Description (which I used extensively in Counterfeit Monkey) are designed to do discourse planning: what can the player see, in theory? Of those things, are there any that should be hidden or not described? Now we have the list of what we want to mention, what order do we want to apply to the description? Once we’ve ordered it, what exact language do we use to describe each piece? Are we encountering this object for the first time, and if so, is there special introductory text that we should associate with it?

In contrast, Parrigues (and Voyageur as well, I believe) works from the surface in, starting with a list of the types of sentences it wants to write and filling in the world model as needed in order to supply those sentences with particular detail.

Additional resources:

- Improv, Bruno Dias’ JavaScript generative text library with tagging; Bruno wrote a tutorial for this system as part of last year’s ProcJAM

- Tracery, Kate Compton’s novice-friendly generative text system

- James Ryan’s Expressionist project

- Latest versions of Room Description Control and Tailored Room Description

- Return to Ditch Day is a game by Mike Roberts that explores a lot of the special functionality of the TADS 3 library

- Savoir-Faire and Metamorphoses are games of mine that particularly dig into the physical model, tracking size, shape, and material of objects

- PROCJAM runs yearly and is an opportunity for people to make new procedural projects and learn about other people’s. In recent years, it has been crowdfunded and produced talks and tutorials as well. The 2018 iteration of PROCJAM is fundraising now, so if you like, you can help bring it back for another year!

- A lot of what I do in my current job at Spirit AI focuses on bringing these same considerations — modeled behavior and thought, changes of speech pattern, rendering a model via text — into character interaction.

- “Writing in Collaboration with the System” is a 2014 article of mine on how the mechanic (including world model features in parser IF) can serve as a writing prompt.

One thought on “World Models Rendered in Text”